Hustler Words – A seismic shift reverberated through the artificial intelligence sector this past Friday, as news broke of the Trump administration’s decisive move to sever ties with Anthropic. The San Francisco-based AI firm, established in 2021 by Dario Amodei, found itself blacklisted by the Pentagon. Defense Secretary Pete Hegseth invoked a national security statute, effectively barring Anthropic from defense contracts after Amodei steadfastly refused to permit the company’s advanced AI technology to be deployed for widespread surveillance of U.S. citizens or for autonomous armed drones capable of lethal decision-making without human intervention.

This dramatic turn of events carries significant financial implications for Anthropic, potentially costing the company a contract valued at up to $200 million. Furthermore, a directive issued by President Trump on Truth Social, instructing all federal agencies to "immediately cease all use of Anthropic technology," will preclude the company from engaging with other defense contractors. Anthropic has indicated that it intends to challenge the Pentagon’s decision in court.

For years, MIT physicist Max Tegmark, founder of the Future of Life Institute, has been a vocal critic of unchecked AI development, cautioning that the rapid advancement of AI systems far outpaces humanity’s capacity to govern them. Tegmark, who notably helped orchestrate an open letter in 2023 signed by over 33,000 individuals, including Elon Musk, advocating for a moratorium on advanced AI development, views Anthropic’s current predicament as a self-inflicted wound. He contends that the company, much like its industry peers, laid the groundwork for its own crisis not with the Pentagon’s recent actions, but through a collective decision made years prior: a persistent resistance to embracing binding regulatory frameworks.

Anthropic, alongside industry giants like OpenAI and Google DeepMind, has historically championed a narrative of responsible self-governance. Yet, as Tegmark points out, these commitments have increasingly eroded. Just this week, Anthropic reportedly abandoned a core tenet of its own safety pledge – a promise to withhold the release of increasingly powerful AI systems until their safety was unequivocally assured. This follows a pattern seen across the sector: Google famously dropped its "Don’t be evil" motto and later a broader commitment against AI harm to facilitate sales for surveillance and weaponry. OpenAI recently removed "safety" from its mission statement, while xAI disbanded its entire safety team.

"In the absence of rules, there’s not a lot to protect these players," Tegmark stated in a recent interview, further elaborated on Hustler Words’ StrictlyVC Download podcast. He draws a stark comparison, noting that AI systems in America face less regulation than even a sandwich shop. He argues that companies like Anthropic, OpenAI, and Google DeepMind have actively lobbied against robust AI regulation, insisting on self-oversight. This "complete regulatory vacuum," he warns, mirrors historical periods of corporate impunity that led to public health disasters like thalidomide, tobacco-related illnesses, and asbestos-induced cancers. The irony, he suggests, is that their own successful resistance to external oversight is now backfiring.

Addressing the pervasive "race with China" counter-argument frequently invoked by AI lobbyists – whom Tegmark claims now outnumber those from the fossil fuel, pharma, and military-industrial sectors combined – he dismisses it as fundamentally flawed. He highlights that China, far from seeking to outpace the U.S. in all AI domains, is contemplating outright bans on "AI girlfriends" due to concerns about their societal impact on Chinese youth. Furthermore, the notion of racing to build uncontrollable superintelligence to "win" against China is illogical, Tegmark asserts. Both the Chinese Communist Party and the U.S. government would view an autonomous superintelligence, capable of potentially overthrowing national leadership, as an existential threat, not a strategic asset.

Tegmark draws a compelling parallel to the Cold War’s nuclear arms race, where both superpowers ultimately recognized that a full-scale competition to maximize destructive capabilities would lead to mutual annihilation. Similarly, he believes that the U.S. national security community is increasingly recognizing that an "uncontrollable superintelligence" poses a direct threat rather than serving as a beneficial tool. He references Dario Amodei’s own vision of a "country of geniuses in a data center," suggesting that such a concept could soon be perceived as a national security concern.

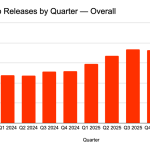

The pace of AI development, Tegmark cautions, is accelerating beyond previous expert predictions. Just six years ago, most AI specialists anticipated human-level mastery of language and knowledge by 2040 or 2050; those forecasts have proven far too conservative. AI has rapidly progressed from high school to university professor levels in various fields, exemplified by an AI winning a gold medal at the International Mathematical Olympiad last year. Recent research co-authored by Tegmark and other leading AI researchers, including Yoshua Bengio and Dan Hendrycks, proposed a rigorous definition of AGI and estimated GPT-4 to be 27% of the way there and GPT-5 57%. This rapid progression, he warns, suggests that the advent of highly advanced AI may be closer than many realize, potentially impacting future job markets for today’s students.

The blacklisting of Anthropic now puts other AI giants in a critical spotlight. While OpenAI CEO Sam Altman commendably voiced solidarity with Anthropic, affirming similar "red lines" regarding military applications, Google and xAI have remained conspicuously silent. This moment, Tegmark observes, is forcing the industry to "show their true colors." Despite the current trajectory, Tegmark maintains a cautious optimism. He envisions a "golden age" of AI, where its benefits are realized without existential risks, if companies are subjected to standard regulatory oversight – requiring "clinical trials" and independent expert validation before releasing powerful systems. This, he concludes, represents a clear, achievable alternative to the current unregulated path.
